You Can't Catch Up on AI by Throwing Money at It

Companies are used to buying their way out of problems. Hire consultants, acquire a startup, throw budget at it. That won't work this time. Here's why.

Fabian Mösli


Key Takeaways

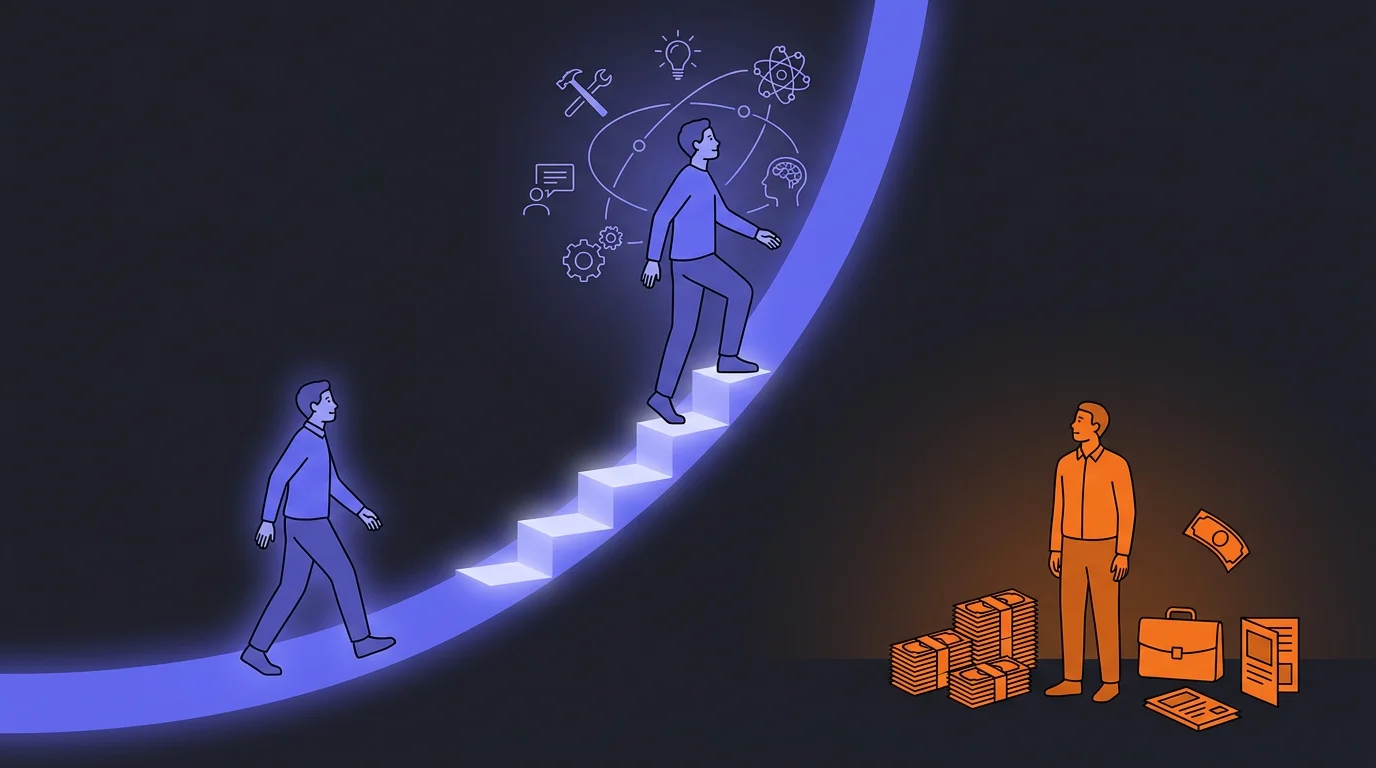

- Money cannot buy back lost time: Unlike traditional trends, you cannot catch up on AI by hiring consultants or acquiring startups in six months. Hands-on learning compounds exponentially, and early adopters are in a different league.

- Real AI expertise is not for sale: The top practitioners who actually understand how to build autonomous agent networks are busy running businesses and creating their own companies, not selling their time as external consultants.

- The speed difference is astronomical: A tiny, AI-native startup team can accomplish in a few hours what takes a traditional company months to execute. You must get in the trenches, start small, and build a compounding advantage today.


Here’s something most companies don’t want to hear: if you’ve been sleeping on AI, you can’t just write a check to fix it.

This might sound dramatic. It might even sound like fear-mongering from someone trying to sell you something. I promise you it’s neither. Let me explain why this time is different.

The Playbook That Won’t Work Anymore

Companies are used to catching up. It’s practically a business strategy: wait for a new trend to prove itself, then hire consultants, acquire a startup, or throw budget at it. You might be six months behind, maybe a year, but money closes the gap. It always has.

I don’t think that works with AI. Here’s why.

Learning compounds

AI knowledge doesn’t grow linearly. It grows exponentially. Every week you spend learning, experimenting, and building makes the next week more productive. You develop intuitions. You discover combinations. You build on yesterday’s insight to have today’s breakthrough.

This is what “compounding” means in practice: the person who started six months ago isn’t six months ahead of you. They’re in a completely different league. Their mental models are richer. Their instincts are sharper. They see possibilities you can’t even imagine yet — not because they’re smarter, but because they’ve been in the trenches longer.

And the gap gets wider every day, not narrower.
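If you want to see why the gap widens, here is a toy model, not a measurement. It assumes skill improves by a fixed percentage of your current level each week; the 5% rate and the function names are illustrative assumptions, not figures from any study.

```python
def linear_skill(weeks: int, gain_per_week: float = 0.05) -> float:
    """Skill grows by the same fixed amount every week, starting from 1.0."""
    return 1.0 + gain_per_week * weeks


def compounding_skill(weeks: int, rate_per_week: float = 0.05) -> float:
    """Skill grows by a fixed percentage of the current level every week."""
    return (1.0 + rate_per_week) ** weeks


def gap(head_start_weeks: int, weeks_since_late_start: int,
        rate_per_week: float = 0.05) -> float:
    """Absolute skill gap between an early starter and a late starter.

    Both learn at the same (assumed) rate; the early starter simply
    began head_start_weeks earlier.
    """
    early = compounding_skill(head_start_weeks + weeks_since_late_start, rate_per_week)
    late = compounding_skill(weeks_since_late_start, rate_per_week)
    return early - late


if __name__ == "__main__":
    # Compare the gap on the day the late starter begins and half a year later.
    for weeks in (0, 26):
        print(f"weeks since late start: {weeks:2d}  gap: {gap(26, weeks):.2f}")
```

Under these assumptions, a 26-week head start is a bigger absolute gap half a year later than it was on day one, even though both people learn at the same rate. With linear growth the gap would stay constant. The real rate is unknowable; the shape of the curve is the point.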

The knowledge isn’t for sale

Here’s the second problem: the people who really understand how to build AI-first organizations — the ones who’ve built the systems, developed the workflows, created the knowledge architectures — they don’t need to do consulting. They’re too busy running businesses.

Think about it. If you genuinely know how to set up a swarm of AI agents that can do in hours what a traditional team does in months, why would you sell that knowledge as a consultant for a day rate? You’d use it to build something of your own. You’d start companies. Some people can even run multiple businesses essentially on their own, with AI doing the heavy lifting.

So when the big consultancies come knocking and offer you an “AI transformation package” — ask yourself: do the people designing this package actually use AI the way the best practitioners do? Or are they repackaging frameworks they learned from reading about it?

It’s easier to go one direction than the other

Here’s something that keeps me up at night. It’s much easier for a great tech company to become any other kind of company than the other way around.

An AI-first tech company that wants to enter insurance can learn the insurance business. They can acquire that knowledge, hire domain experts, feed it into their AI systems, and move surprisingly fast. They already know how to build, iterate, and use AI.

But an insurance company that wants to become tech-first? That’s a fundamentally harder transformation. The culture is different. The talent is different. The pace is different. Everything needs to change, and most of it has nothing to do with technology.

Claire put this better than I ever could. Startups have always been faster than incumbents — they had more to win, more to lose, more hunger. But the speed difference has changed to a degree that’s genuinely hard to comprehend. An AI-native team can accomplish in a few hours what takes a traditional company months. Not because they work harder. Because they work in a fundamentally different way.

“But Surely It’s Not That Urgent”

Maybe. I could be wrong about the timeline. I could be too dramatic about the scale. But consider this:

Paul Krugman — Nobel laureate, one of the most respected economists alive — predicted in 1998 that the internet’s economic impact would be “no greater than the fax machine’s.” He was writing about something he didn’t understand deeply enough, and he was spectacularly wrong.

Every business leader who’s currently dismissing AI as “just a productivity tool” or “not relevant to our industry” should ask themselves: am I making predictions based on deep, hands-on understanding? Or am I, like Krugman, making confident statements about something I haven’t spent enough time with?

The only honest way to know what AI can and can’t do is to use it seriously. Not to read about it. Not to attend a conference about it. To actually be in the trenches, building, experimenting, failing, learning.

How to Know If You’re Making Progress

Alright, let’s say you’ve started. You’re following the pull-based approach. People are experimenting. Things are happening. How do you know if it’s actually working?

This is a genuinely hard question, and I haven’t found many people with good answers to it. Here’s what I’ve learned so far.

Early on: anecdotal evidence is fine

In the first weeks and months, you’re looking for stories. Concrete examples of someone using AI to do something meaningfully better or faster.

At Carewell, I asked the early participants to share what they’d been doing with AI in our company chat. Not formal reports — just casual messages. “Used Claude to prepare for the client meeting today, it pulled together everything from our last three interactions in 30 seconds.” That kind of thing.

This serves two purposes. First, it gives you visibility into what people are actually doing. Second — and this is the more important one — it creates the pull effect. When the CEO writes that he created an accelerator application in 30 minutes instead of four hours, other people notice. When he pulls live business metrics during a leadership meeting by just asking a question in plain English instead of building a dashboard — people’s eyes go wide.

These moments are your evidence. Collect them.

Watch for unsolicited interest

The strongest signal that your AI initiative is working is when people who weren’t involved start asking to be. That’s pull. That’s genuine demand. No survey or KPI can capture this as accurately as simply paying attention to who’s knocking on your door.

Track what you can, but don’t over-engineer it

As your usage grows, you can start tracking things:

- How many people are actively using your AI tools

- Token usage and whether people are hitting their limits (a good sign, actually)

- Contributions to your knowledge base

- Specific time savings people report

But don’t build a huge analytics dashboard before you have something to analyze. Early on, talk to people. Do informal interviews. Ask: “What’s working? What isn’t? What surprised you?”
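In that spirit, a spreadsheet export and a few lines of script are enough to start. The sketch below rolls the four signals listed above into one small summary. The record fields, the `summarize` function, and the idea of a weekly per-user export are all assumptions for illustration; your tools will expose different data.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One user's week in a hypothetical usage export (all fields assumed)."""
    user: str
    tokens_used: int
    token_limit: int
    kb_contributions: int
    minutes_saved: int  # self-reported, so treat it as a rough signal


def summarize(records: list[UsageRecord]) -> dict:
    """Summarize active users, limit hits, contributions, and reported savings."""
    active = [r for r in records if r.tokens_used > 0]
    return {
        "active_users": len({r.user for r in active}),
        # Hitting the limit is treated as a good sign, as noted above.
        "users_at_limit": sorted(
            {r.user for r in active if r.tokens_used >= r.token_limit}
        ),
        "kb_contributions": sum(r.kb_contributions for r in records),
        "reported_minutes_saved": sum(r.minutes_saved for r in records),
    }


if __name__ == "__main__":
    week = [
        UsageRecord("ana", 90_000, 100_000, 3, 120),
        UsageRecord("ben", 100_000, 100_000, 1, 45),
        UsageRecord("cy", 0, 100_000, 0, 0),
    ]
    print(summarize(week))
```

Anyone showing zero usage is a prompt for a conversation, not a conclusion. The numbers only tell you who to go talk to.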

Watch out for silent quitters

The biggest risk isn’t dramatic failure — it’s quiet abandonment. People try AI once or twice, don’t get immediate value, and silently stop using it. You won’t see this in any dashboard. You’ll only catch it by staying close to your team and having real conversations.

If your first round of participants struggles to get value, you have a problem. It means the tools aren’t set up well enough, people need more support, or the use cases aren’t right. Fix it before you expand.

The Compounding Advantage in Practice

Let me make the compounding thing concrete. Here’s what it looked like at Carewell over just a few months:

Month 1: I set up a basic knowledge system. It knows our company, our product, our market. It’s useful but limited. I’m the only one using it seriously.

Month 2: I’ve added competitor analysis, client insights, regulatory information, and product screenshots. The AI now answers questions about our business with genuine depth. My own productivity has noticeably increased.

Month 3: The CEO and two other team members start using the system. Each of them feeds in knowledge from their domain. The system starts making connections across departments that no single person would make. The CEO uses it in leadership meetings. Others start asking to join.

Month 4: The system has been fed hundreds of pieces of company knowledge. New team members can get up to speed dramatically faster. The AI catches contradictions between what different people believe. It flags when knowledge is getting stale. It’s becoming genuinely hard to imagine working without it.

Each month built on the previous one. The early investment was slow. The returns accelerated. That’s compounding.

What This Means for You

I know this might sound overwhelming. “Great, so I’m already behind and the gap is growing.” That’s the bad news.

The good news: you can still start. The compounding works in your favor the moment you begin. Today is the earliest you’ll ever be.

You don’t need to build a massive system on day one. You don’t need to hire an AI team. You don’t need a budget. Start with the mental model shift: understand that AI is a brilliant colleague who knows nothing about your business, and start teaching it. Start with one tool, one use case, one small win.

Then build from there. Build a Company AI Operating System. Find your curious people and let them lead the way. Feed the system. Watch it compound.

The worst thing you can do right now isn’t to start small. It’s to not start at all.

Published: 2026-02-26

Last updated: 2026-02-26