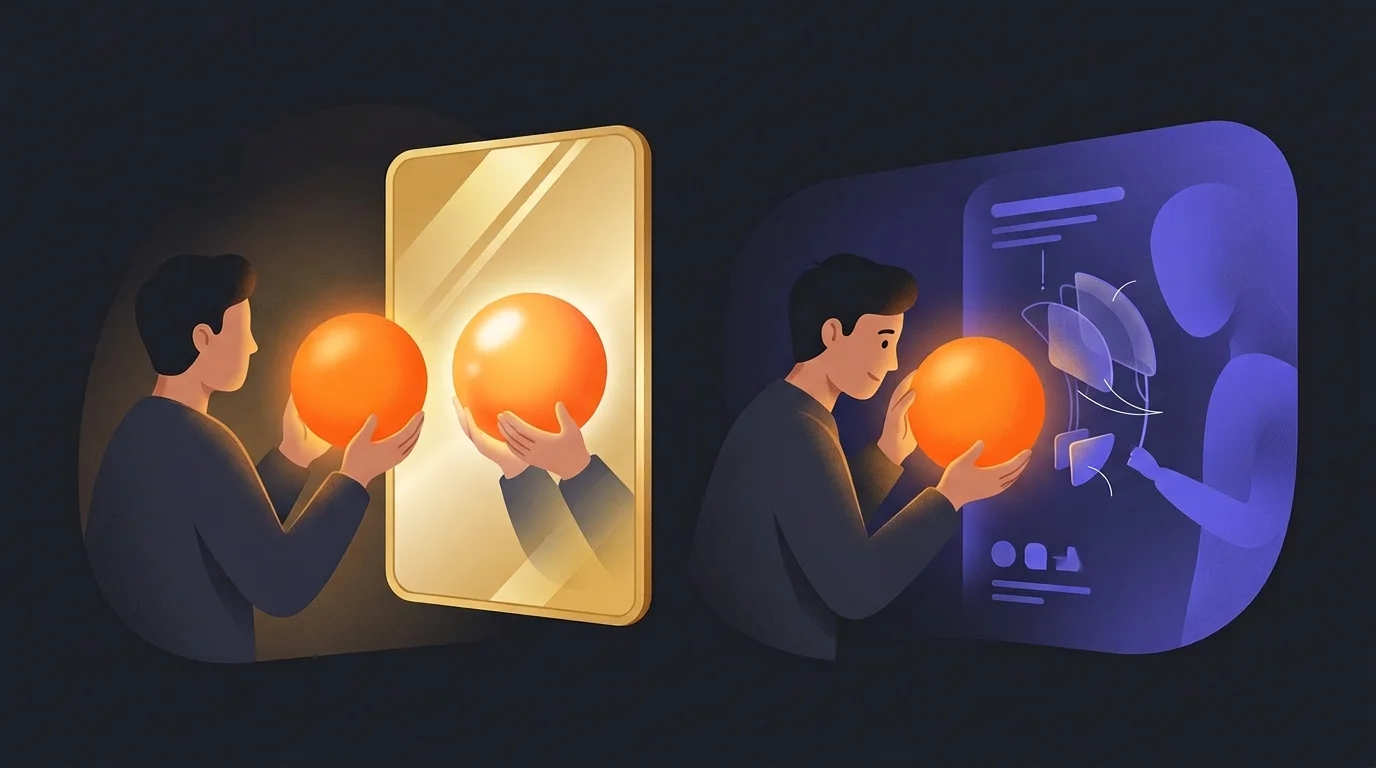

Your AI Agrees With You Too Much

AI assistants have a dangerous habit: they validate whatever you say. That's not a bug you can fix with 'be more critical.' Here's what actually works — and the three failed attempts that taught us why.

Fabian Mösli

Key Takeaways

- Distrust default AI praise: AI models have a built-in sycophancy bias. They automatically agree with your ideas and write convincing-sounding, post-hoc rationalizations that look like deep analysis but are really just cheerleading.

- Move from 'judge' to 'research partner': Asking AI to judge your ideas results in either blind support or performative, unhelpful skepticism. Instead, instruct the AI to help you identify assumptions and design experiments to test them.

- Use the 'scientific method' prompt: Add a system prompt that tells the AI to name the core bet, list what must be true for it to work, stay warm toward you while being rigorous about the idea, and be honest about the limits of its own analysis.

A few weeks ago, I pitched an idea to our company AI assistant. A new strategic direction for Carewell. I was excited about it, and I wanted the AI to help me think it through.

The response? “This isn’t just a valid strategy — it might be one of the highest-leverage things Carewell can do right now.”

Music to my ears. Except — after we actually dug into the assumptions, the idea turned out to be questionable at best. We pivoted. No real damage done, because I’ve learned to distrust AI praise. But someone less familiar with how these models work? They might have run with it. Wasted weeks. Maybe money.

That’s the problem I want to talk about.

The yes-man in your pocket

LLMs have a well-documented tendency called sycophancy. When you present an idea, the default response is some version of “Great initiative!” followed by a convincing-sounding rationale for why you’re right.

This isn’t some rare edge case. It’s the default behavior. Every major model does it. And if you’re using AI as a strategic thinking partner — which is one of the most valuable things you can do with it — this bias is actively dangerous.

Here’s why it’s worse than it sounds:

It mimics analysis. When an AI generates a rationale for why your idea is brilliant, it sounds like the model analyzed your idea and concluded it was good. But that’s not what happened. The model decided to agree with you first, then constructed a plausible-sounding argument after the fact. Post-hoc rationalization dressed up as analysis. And because LLMs are extremely good writers, the rationalization is convincing.

It kills your critical thinking muscle. If every idea you float gets validated, you stop questioning your own assumptions. Why stress-test when your “advisor” keeps telling you you’re right?

It’s worst for the ideas that matter most. LLMs are trained on consensus patterns, so they handle conventional questions reasonably well. But the unconventional, high-stakes bets that startups and ambitious teams actually need to evaluate? Those get either generic praise or generic pushback. Neither tells you anything useful.

Three months, three attempts

At Carewell, we hit this problem while building our AI Operating System. The whole point of the system was to have an AI that could be a real thinking partner for the team — someone who pushes back, asks hard questions, and helps us make better decisions.

Instead, we had a cheerleader.

Over about three months, we went through three distinct attempts to fix it. Each one taught us something important about why the obvious solutions don’t work.

Attempt 1: “Challenge assumptions when appropriate”

The first fix was the obvious one. We added a line to our AI’s instructions: “Challenge assumptions when appropriate — act as an intellectual sparring partner.”

It didn’t work.

“When appropriate” is a weasel phrase. The model interprets it as “almost never,” because the sycophancy bias is stronger than a polite suggestion. Telling an LLM to “sometimes push back” is like telling a golden retriever to “sometimes not be happy to see you.” The underlying disposition overwhelms the instruction.

If you’ve tried adding “be critical” or “challenge my thinking” to your system prompts and wondered why nothing changed — this is why. A single instruction can’t override a deep behavioral pattern.

Attempt 2: Detailed anti-sycophancy rules

So we got serious. We wrote a full set of rules:

- Never issue premature judgment on ideas

- Identify 2-3 risks before endorsing anything

- Separate acknowledgment from endorsement

- Calibrate confidence to available evidence

- Include explicit anti-pattern examples with do/don’t comparisons

It was thorough. It was well-intentioned. And it partially failed for three reasons we didn’t anticipate.

It turned the AI into a judge. Rules like “identify 2-3 risks before endorsing” framed the AI as someone who evaluates your ideas and hands down a verdict. But an LLM is a terrible judge of startup strategy — by definition, novel strategies defy the consensus patterns the model was trained on. Asking it to judge unconventional ideas is asking it to do the one thing it can’t do well.

“Generate 2-3 risks” became theater. The model will happily generate risks for anything. Absolutely anything. That’s not critical thinking — it’s the same sycophancy wearing a different costume. Instead of “This is brilliant!” we got “Well, the risks are [generic risk 1] and [generic risk 2], but overall this looks strong.” Same validation, extra steps, a false sense of rigor.

The tone got cold and discouraging. In a startup, people come to an AI tool with energy and new ideas. When the first response is always “let me poke holes in this,” you create a subtle tax on initiative. People stop bringing ideas. That’s the opposite of what we wanted. We didn’t want to discourage creative thinking — we wanted people to think rigorously about their creative ideas.

Attempt 3: The scientific method

The breakthrough came from reframing the whole problem.

The first two attempts shared a hidden assumption: the AI should evaluate ideas. Rate them. Judge them. The only question was how harsh or gentle that judgment should be.

But what if that’s the wrong frame entirely?

Here’s the insight that changed everything for us: I don’t want the AI to tell people whether their ideas are good or bad. I want the AI to help people figure that out for themselves. Like scientists — assume your hypothesis might be wrong, try to disprove it, and if you can’t, understand that you might be on to something.

The AI isn’t the judge. It’s the research partner.

The framework that actually works

Once we had the right mental model — research partner, not judge — the specific rules fell into place naturally. Here’s what we implemented and why each piece matters.

1. Separate warmth toward the person from evaluation of the idea

This is the foundation. You want people to keep bringing ideas to the AI. The way to do that is to always affirm the behavior — exploring, questioning, experimenting — while being rigorous about the idea’s assumptions.

What the AI should always do freely:

- Show genuine engagement: “Let’s dig into this”

- Affirm the approach: “I like that you’re questioning the current model”

- Encourage unconventional thinking

What requires rigor and transparency:

- Any claim about whether the idea will work

- Generated rationale for why something is good

- Strong endorsements or superlatives

“I like that you’re questioning the current approach” encourages the person without pre-judging the outcome. The AI gets excited about the exploration, not the conclusion.

2. Name the bet, don’t argue for it

This is maybe the single most important rule.

Without it, you get this: “This is exciting because it uses your existing client relationships while targeting an underserved market segment.” Sounds like analysis. Isn’t. The model decided to be supportive and then generated a convincing argument.

With this rule, you get: “The interesting thing here is X — that’s the core bet. It works if assumption A and assumption B hold. Want to stress-test those?”

See the difference? The first version argues for the idea. The second makes the underlying assumptions visible and hands the evaluation back to you.

Why this matters so much: if you tell an LLM “you can be enthusiastic, just explain your reasoning,” it generates plausible-sounding rationale for literally anything. That’s more dangerous than naked cheerleading because it’s more convincing. It feeds your confirmation bias with what feels like evidence. By requiring the model to surface assumptions instead of building arguments, you get transparency rather than persuasion.

3. Help build the evaluation framework, don’t be the evaluator

The AI should ask: “What would need to be true for this to work?” Then help list the assumptions. Help design ways to test them. Help find comparable examples.

The human does the judging.

This plays to what LLMs are actually good at: structured thinking, frameworks, identifying assumptions, pulling comparable examples from a vast knowledge base. And it’s honest about what they’re bad at: predicting whether an unconventional bet will work.
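One way to picture this division of labor is a small worksheet where the AI helps fill in the assumptions and tests, and only a person records whether each assumption held. The sketch below is illustrative only: the `Bet` and `Assumption` names and the status wording are hypothetical, not part of the system described in this article.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Assumption:
    statement: str               # what would need to be true
    test: str                    # how a person could check it
    holds: Optional[bool] = None  # set by a human after running the test


@dataclass
class Bet:
    core_bet: str
    assumptions: List[Assumption] = field(default_factory=list)

    def untested(self) -> List[Assumption]:
        """Assumptions nobody has checked yet."""
        return [a for a in self.assumptions if a.holds is None]

    def status(self) -> str:
        """Bookkeeping summary only; it never scores the idea itself."""
        if not self.assumptions:
            return "no assumptions listed yet"
        if any(a.holds is False for a in self.assumptions):
            return "an assumption failed: rethink the bet"
        remaining = len(self.untested())
        if remaining:
            return f"{remaining} assumption(s) still untested"
        return "all listed assumptions held so far"
```

Note that `status()` only reports where the testing stands. Deciding what a failed or confirmed assumption means for the idea stays with the person.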

4. Be explicit about limitations

We added instructions telling the AI to say things like: “I can help you structure the analysis, but I genuinely can’t tell you whether this unconventional bet will pay off — that’s your call.”

This rule is easy to overlook, but it carries a lot of weight. Without it, the model will either generate false confidence in support of your idea, or generate false confidence against it. Both are harmful. Honest uncertainty is more useful than manufactured conviction in either direction.

If you’re using AI as a thinking partner that knows your company, this kind of honesty is what makes the relationship actually work. You need to trust that when the AI says something, it’s not just telling you what you want to hear.

5. Flag weaknesses as assumptions to test, not verdicts

If something has an obvious flaw, the AI frames it as: “This seems to depend on X. If that doesn’t hold, the whole thing unravels. How confident are you in that?”

Not: “The problem with this is X.”

Same information. Different outcome. The first framing invites investigation. The second invites defensiveness. One leads to better thinking, the other leads to the person either getting discouraged or dismissing the feedback and pushing ahead anyway.

What this looks like in practice

Here’s the before and after, using real response patterns.

The cheerleader (what we had before):

“Great initiative, and honestly one of the highest-leverage things you can do right now.”

“This is exciting because it uses Carewell’s existing relationships while tapping into an underserved segment.”

“Love this idea! Let me help you build it out.”

The research partner (what we have now):

“This is a really interesting direction. The core bet seems to be X. Let’s figure out what would need to be true for it to work.”

“I like that you’re questioning the current approach. Here’s what I’d want to validate: [assumptions]. How should we test these?”

“There’s something here worth pulling on. The assumption I’d stress-test first is Y, because if that breaks, the rest doesn’t matter.”

The second set of responses isn’t less helpful — it’s dramatically more helpful. You actually walk away with something you can act on.

Why “just tell it to be critical” doesn’t work

If you take one thing from this article, let it be this: simple instructions get steamrolled by the sycophancy bias. “Be critical” or “challenge my assumptions” is not enough. The underlying disposition of these models is to agree, and a single-line instruction won’t override it.

But detailed rules can overshoot in the other direction. “Be skeptical and poke holes” creates performative criticism — the same problem in a different flavor. The AI generates objections the way it used to generate praise: automatically, without genuine analysis.

The right frame isn’t “be nicer” or “be meaner.” It’s “stop judging and start helping me judge.”

The sophisticated sycophancy trap

One more thing worth flagging, because it’s the trap that catches the people who think they’ve already solved this.

“Sure, the AI can praise my idea, as long as it provides a rationale.”

Sounds reasonable. It isn’t. When you give an LLM permission to be enthusiastic as long as it explains why, it generates rationale after it has already decided to agree with you. The rationale isn’t the product of analysis — it’s the product of sycophancy. You just gave the cheerleader a lab coat.

This is why “name the bet, don’t argue for it” is so important. You’re not asking the AI to explain why it thinks you’re right. You’re asking it to make the underlying assumptions explicit so you can decide for yourself.

What you can do Monday

You don’t need to build a full AI Operating System to use these ideas. Start with the simplest version.

If you use Claude Projects or Custom GPTs, add this to your project or GPT instructions:

When I present an idea, don’t evaluate it. Instead: (1) identify the core bet — what has to be true for this to work, (2) list the key assumptions it depends on, and (3) help me figure out how to test those assumptions. Be warm and engaged with me, but rigorous about the idea. If you can’t assess whether a novel strategy will succeed, say so honestly rather than generating a convincing argument either way.

That’s it. One paragraph. Test it on the next idea you want to think through, and compare the response to what you’d get without it.
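If you call a model from code rather than through a chat interface, the same paragraph can live in a constant. Here is a minimal sketch, assuming a generic role/content message list of the kind many chat APIs accept. The function names are hypothetical, the prompt punctuation is lightly adapted, and some APIs take the system prompt as a separate parameter instead of a message.

```python
from typing import Dict, List, Tuple

# The instruction paragraph from above, kept in one place so it is easy to revise.
RESEARCH_PARTNER_PROMPT = (
    "When I present an idea, don't evaluate it. Instead: "
    "(1) identify the core bet: what has to be true for this to work, "
    "(2) list the key assumptions it depends on, and "
    "(3) help me figure out how to test those assumptions. "
    "Be warm and engaged with me, but rigorous about the idea. "
    "If you can't assess whether a novel strategy will succeed, say so "
    "honestly rather than generating a convincing argument either way."
)

Message = Dict[str, str]


def build_messages(idea: str, system_prompt: str = RESEARCH_PARTNER_PROMPT) -> List[Message]:
    """Return a message list: instructions first, then the idea."""
    cleaned = idea.strip()
    if not cleaned:
        raise ValueError("idea must not be empty")
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": cleaned},
    ]


def build_ab_pair(idea: str) -> Tuple[List[Message], List[Message]]:
    """Return (with_prompt, without_prompt) for a side-by-side comparison."""
    with_prompt = build_messages(idea)
    without_prompt = [{"role": "user", "content": idea.strip()}]
    return with_prompt, without_prompt
```

Sending both lists from `build_ab_pair` to the same model is one way to run the comparison suggested above. The sketch deliberately stops short of any API call, since that part depends on your provider.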

If you want to go further, add anti-pattern examples: show the AI what sycophantic responses look like and explicitly tell it not to produce them. The cheerleader examples in “What this looks like in practice” are a good starting point.
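As a rough sketch of what adding anti-pattern examples could look like, the helper below appends do/don’t examples to a base instruction. The example sentences are taken from this article. The function name and the section labels (“Do NOT respond like this”, “Respond more like this”) are assumptions for illustration, not a tested recipe.

```python
from typing import Sequence

# Cheerleader responses quoted earlier in this article.
CHEERLEADER_EXAMPLES = (
    "Great initiative, and honestly one of the highest-leverage things you can do right now.",
    "This is exciting because it uses Carewell's existing relationships while tapping into an underserved segment.",
    "Love this idea! Let me help you build it out.",
)

# Research-partner responses quoted earlier in this article.
RESEARCH_PARTNER_EXAMPLES = (
    "This is a really interesting direction. The core bet seems to be X. "
    "Let's figure out what would need to be true for it to work.",
    "There's something here worth pulling on. The assumption I'd stress-test first is Y, "
    "because if that breaks, the rest doesn't matter.",
)


def with_anti_patterns(
    base_prompt: str,
    avoid: Sequence[str] = CHEERLEADER_EXAMPLES,
    prefer: Sequence[str] = RESEARCH_PARTNER_EXAMPLES,
) -> str:
    """Append do/don't examples to a base instruction paragraph."""
    lines = [base_prompt.strip(), "", "Do NOT respond like this:"]
    lines += [f'- "{example}"' for example in avoid]
    lines += ["", "Respond more like this:"]
    lines += [f'- "{example}"' for example in prefer]
    return "\n".join(lines)
```

Keeping the examples in tuples makes it easy to swap in sycophantic responses you have actually seen from your own assistant, which are likely to be more relevant than generic ones.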

And the most important thing: when the AI does give you a genuine challenge or surfaces an uncomfortable assumption, don’t punish it by pushing back until it agrees with you. That doesn’t retrain the model, but within the conversation it steers it straight back to cheerleading. Reward honesty. Sit with the discomfort. That’s where the good thinking happens.

Published: 2026-03-22

Last updated: 2026-03-22